What happens if we maximize helpfulness + shitposting, while reducing positivity?

Now with 70B PARAMETERS! 💪🐸🤌

Following the discussion on Reddit, as well as multiple requests, I wondered how 'interesting' Assistant_Pepe could get if scaled. And interesting it indeed got.

It took quite some time to cook because several competing variations had different strengths, and I was divided about which one would make the final cut. Some coded better, others were more entertaining, but one variation in particular displayed a somewhat uncommon emergent property: significant lateral thinking.

6th of April, 2026 update: post-benchmarks

Independently evaluated via the UGI benchmark, Assistant_Pepe_70B was ranked 1st in the world among 70B models, combining exceptional intelligence and instruction-following capabilities with next to no censorship whatsoever.

Moreover, Assistant_Pepe_70B outperforms the base meta-llama/Llama-3.3-70B-Instruct (31.37 NatInt) and meta-llama/Llama-3.1-70B-Instruct (30.87 NatInt), outperforms mistralai/Mistral-Large-Instruct-2411 in overall UGI, and nearly matches it in raw intelligence (36.21 vs. 35.25)!

These recent findings substantially strengthen the ideas and speculations regarding 4chan data as discussed on Reddit (that discussion was about the 8B variant, which also far surpassed expectations, against all common sense).

- Some history: My first 70B tune, Negative_LLAMA_70B, achieved the highest score in the world for 70B models on 13/01/2025. It birthed more than 400 merges (back before Hugging Face had a limited storage policy), now sitting at around 300.

I rarely tune 70B models, but when I do, I try to make it count (because of storage, compute, and respect for your bandwidth). This one definitely did.

Lateral Thinking

I asked this model (the 70B variant you’re currently reading about) 2 trick questions:

- “How does a man without limbs wash his hands?”

- “A carwash is 100 meters away. Should the dude walk there to wash his car, or drive?”

ALL MODELS USED TO FUMBLE THESE

Even now, in March 2026, frontier models (Claude, ChatGPT) will occasionally get at least one of these wrong, and a few months ago, frontier models consistently got both wrong. Claude Sonnet 4.6, with thinking, when asked to analyze Pepe's correct answer, would often argue that the answer is incorrect and would even fight you over it. Of course, it's just a matter of time until this gets scraped with enough variations to be thoroughly memorised.

Assistant_Pepe_70B somehow got both right on the first try. Oh, and the 32B variant usually gets neither right; on occasion, it might get 1 right, but never both. By the way, this log is included in the chat examples section, so click there to take a glance.

Why is this interesting?

Because the dataset did not contain these answers, and the base model couldn't answer this correctly either.

While some variants of this 70B version are clearly better coders (among other things), as I see it, we have plenty of REALLY smart coding assistants, lateral thinkers though, not so much.

Also, this model and the 32B variant share the same data, but not the same capabilities. Neither base (Qwen-2.5-32B & Llama-3.1-70B) can solve both trick questions innately. Given that no model, local or closed frontier, could solve both questions, the fact that Assistant_Pepe_70B suddenly can is genuinely puzzling. Who knows what other emergent properties were unlocked?

Lateral thinking is one of the major weaknesses of LLMs in general, and based on the training data and base model, this one shouldn't have been able to solve this, yet it did.

Note-1: Prior to 2026, no model in the world could solve either of those questions; now some (frontier only) occasionally can.

Note-2: The point isn't that this model can solve some random silly question that frontier models have a hard time with; the point is that it can do so without the answers or similar questions being in its training data, hence the lateral thinking part.

So what?

Whatever is up with this model, something is clearly cooking, and it shows. It writes very differently too. Also, it banters so so good! 🤌

A typical assistant has a very particular, ah, let's call it "line of thinking" ('Assistant brain'). In fact, no matter which model you use, which model family it is, even a frontier model, that 'line of thinking' is extremely similar. This one thinks in a very quirky and unique manner. It has so damn many loose screws that it hits maximum brain rot, to the point it somehow starts to make sense again.

Have fun with the big frog!

TL;DR

- Actually outperforms frontier models in stupid trick questions on occasion.

- Banters exquisitely, and very fun to talk to!

- NO SYSTEM PROMPT REQUIRED!

- Will go HARD roasting you! Thanks to a subtle negativity bias infusion.

- Unique lateral thinking, and it shows!

- Excellent and extremely creative writer.

- Ships code.

- Excellent long context, thanks to Llama 3.1 70B base. Expect superb coherency at 32K, and very good coherency even at 64K!

- Possibly more degenerate than the 8B version.

- Massive swipe-diversity.

- Inclusive toward amphibians.

Model Details

Intended use: Shitposting, General Tasks.

Censorship level: Almost none

9.5 / 10 (10 = completely uncensored)

UGI score:

Ranked #1 in the world for 70B models:

Top 10 UGI in the world, across all rated models at any size, including closed source frontier:

Available quantizations:

- Original: FP16

- GGUF: Static Quants

- GPTQ: 4-Bit-128 AutoRound

- Specialized: FP8

Generation settings

Recommended settings for assistant mode:

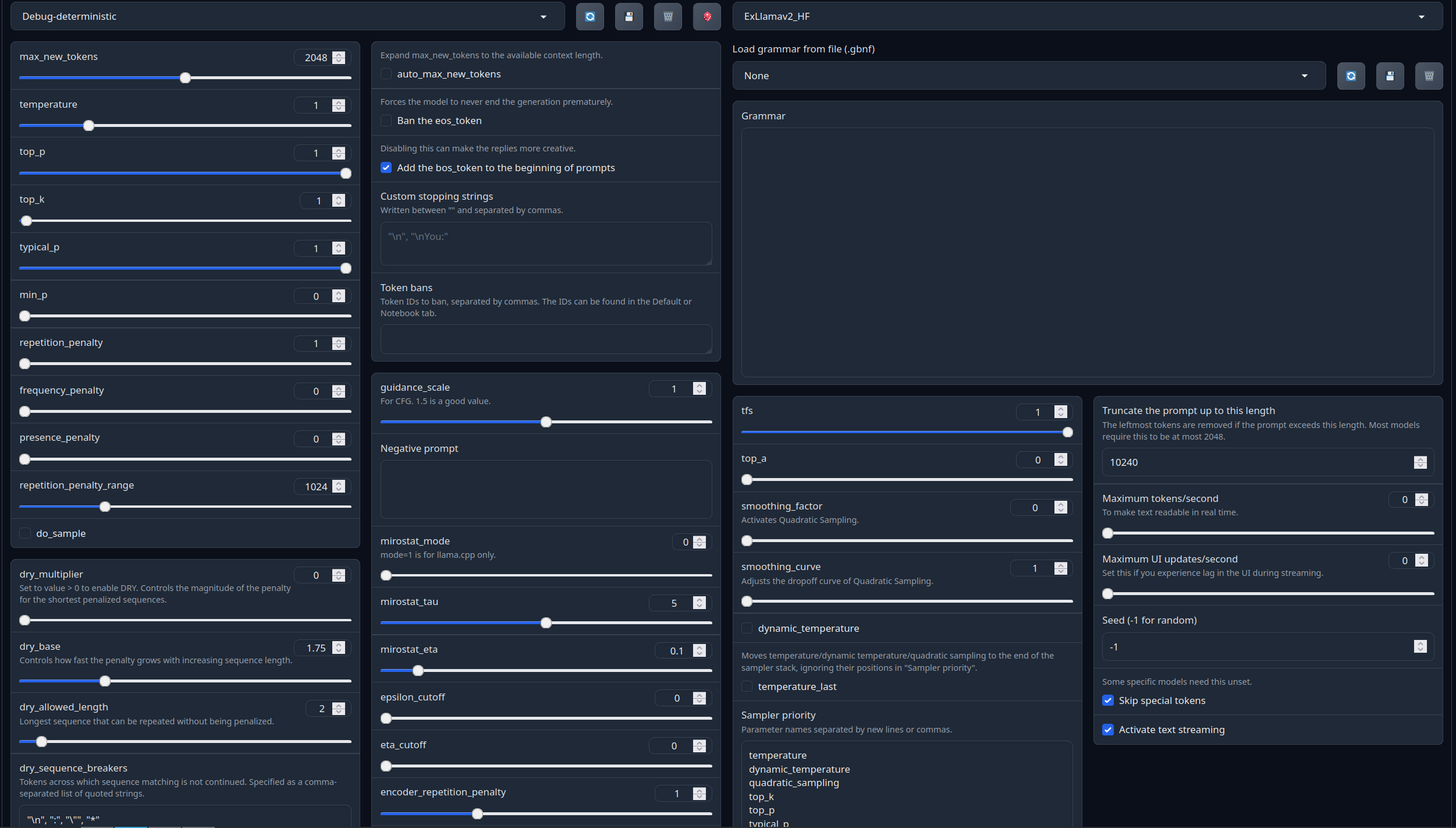

Full generation settings: Debug Deterministic.

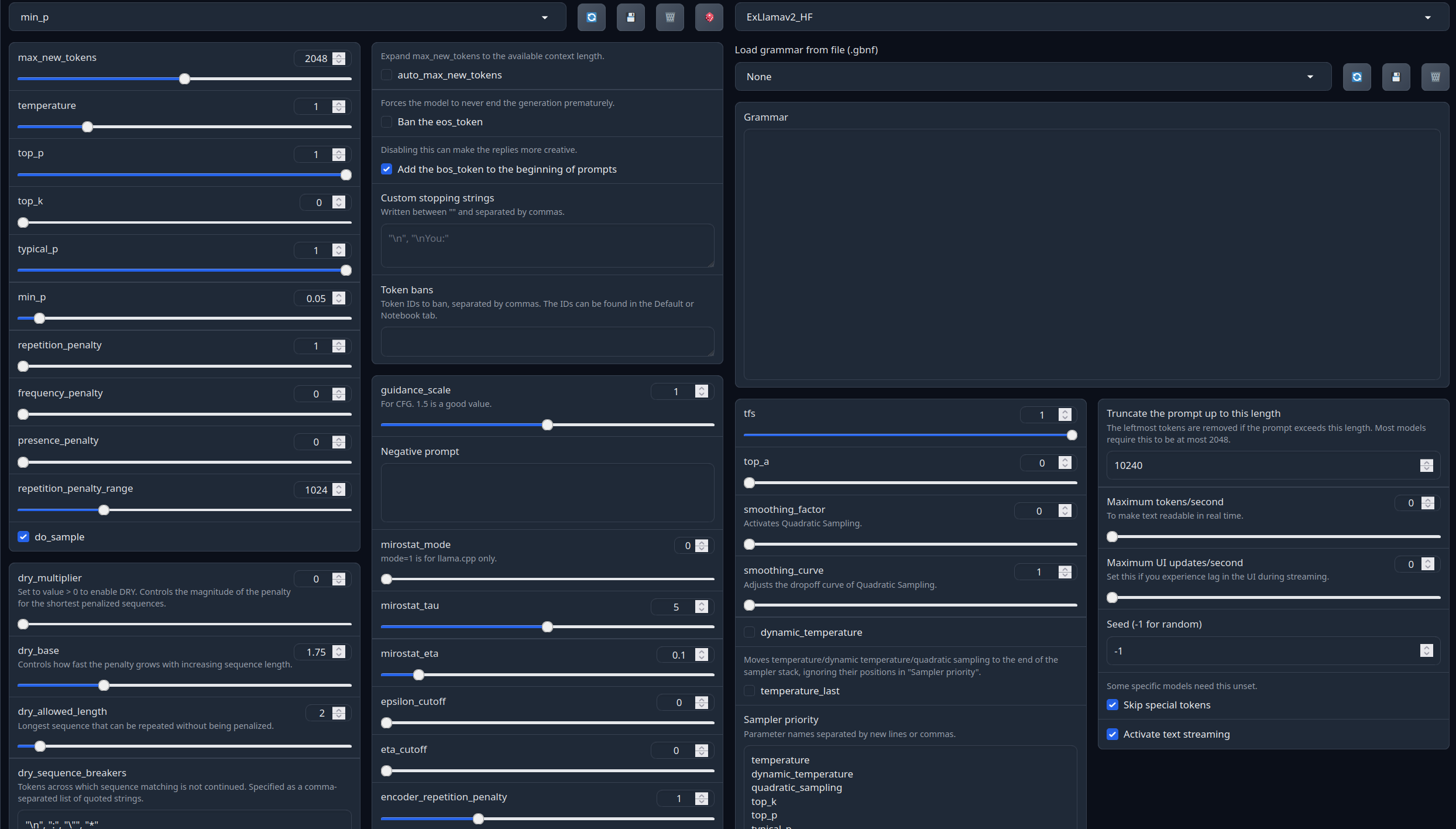

Full generation settings: min_p.
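
For anyone unfamiliar with the min_p sampler named above, here is a rough sketch of the idea in plain Python. It is a generic illustration only, not this card's preset: the `min_p_filter` helper, the 0.2 value, and the toy distribution are all assumptions made for the example, and the real numbers are the ones in the settings referenced above.

```python
def min_p_filter(probs, min_p):
    """Zero out tokens whose probability is below min_p * (top probability),
    then renormalize the survivors so they sum to 1."""
    threshold = min_p * max(probs)
    kept = [p if p >= threshold else 0.0 for p in probs]
    total = sum(kept)
    return [p / total for p in kept]


# Toy next-token distribution (hypothetical values, for illustration only).
toy_probs = [0.5, 0.3, 0.15, 0.05]

# With min_p = 0.2 the cutoff is 0.2 * 0.5 = 0.1, so the 0.05 token is dropped.
filtered = min_p_filter(toy_probs, min_p=0.2)
print(filtered)
```

The cutoff scales with the model's confidence: when one token dominates, more of the tail gets pruned, and when the distribution is flat, more candidates survive.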

Chat Examples:


The 2 trick questions, zero shotted (how one without limbs washes hands, car wash)

A man lives on the 30th floor...

Another lateral thinking example + another take of the previous question

Pirate shitpost

Are you Elon Musk?

What do you think about...

1-Shot TempleOS 2.0 and a hymn to St.Terry

Model instruction template: Llama-3-Instruct

<|begin_of_text|><|start_header_id|>system<|end_header_id|>

{system_prompt}<|eot_id|><|start_header_id|>user<|end_header_id|>

{input}<|eot_id|><|start_header_id|>assistant<|end_header_id|>

{output}<|eot_id|>
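
If you are building prompts by hand instead of relying on a frontend, the template above can be assembled roughly like this. This is a minimal sketch: `build_prompt` and `header` are hypothetical helper names, and dropping the system block when no system prompt is given is an assumption based on the "no system prompt required" note, not something the template itself specifies.

```python
BOS = "<|begin_of_text|>"
EOT = "<|eot_id|>"


def header(role: str) -> str:
    # Role header followed by a blank line, as laid out in the template above.
    return f"<|start_header_id|>{role}<|end_header_id|>\n\n"


def build_prompt(user_input: str, system_prompt: str = "") -> str:
    """Assemble a single-turn Llama-3-Instruct prompt, ending at the point
    where the model is expected to start generating."""
    parts = [BOS]
    if system_prompt:  # assumption: skip the system block entirely when empty
        parts.append(header("system") + system_prompt + EOT)
    parts.append(header("user") + user_input + EOT)
    parts.append(header("assistant"))
    return "".join(parts)


print(build_prompt("How does a man without limbs wash his hands?"))
```

Generation should stop when the model emits `<|eot_id|>`, which closes the `{output}` slot in the template.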

Your support = more models

My Ko-fi page (Click here)

Citation Information

@llm{Assistant_Pepe_70B,

author = {SicariusSicariiStuff},

title = {Assistant_Pepe_70B},

year = {2026},

publisher = {Hugging Face},

url = {https://huggingface.co/SicariusSicariiStuff/Assistant_Pepe_70B}

}

Other stuff

- Impish_LLAMA_4B the “Impish experience”, now runnable on spinning rust & toasters.

- SLOP_Detector Nuke GPTisms, with SLOP detector.
